Chapter 1: Basic Request Flow

The fundamental request flow pattern in distributed systems.

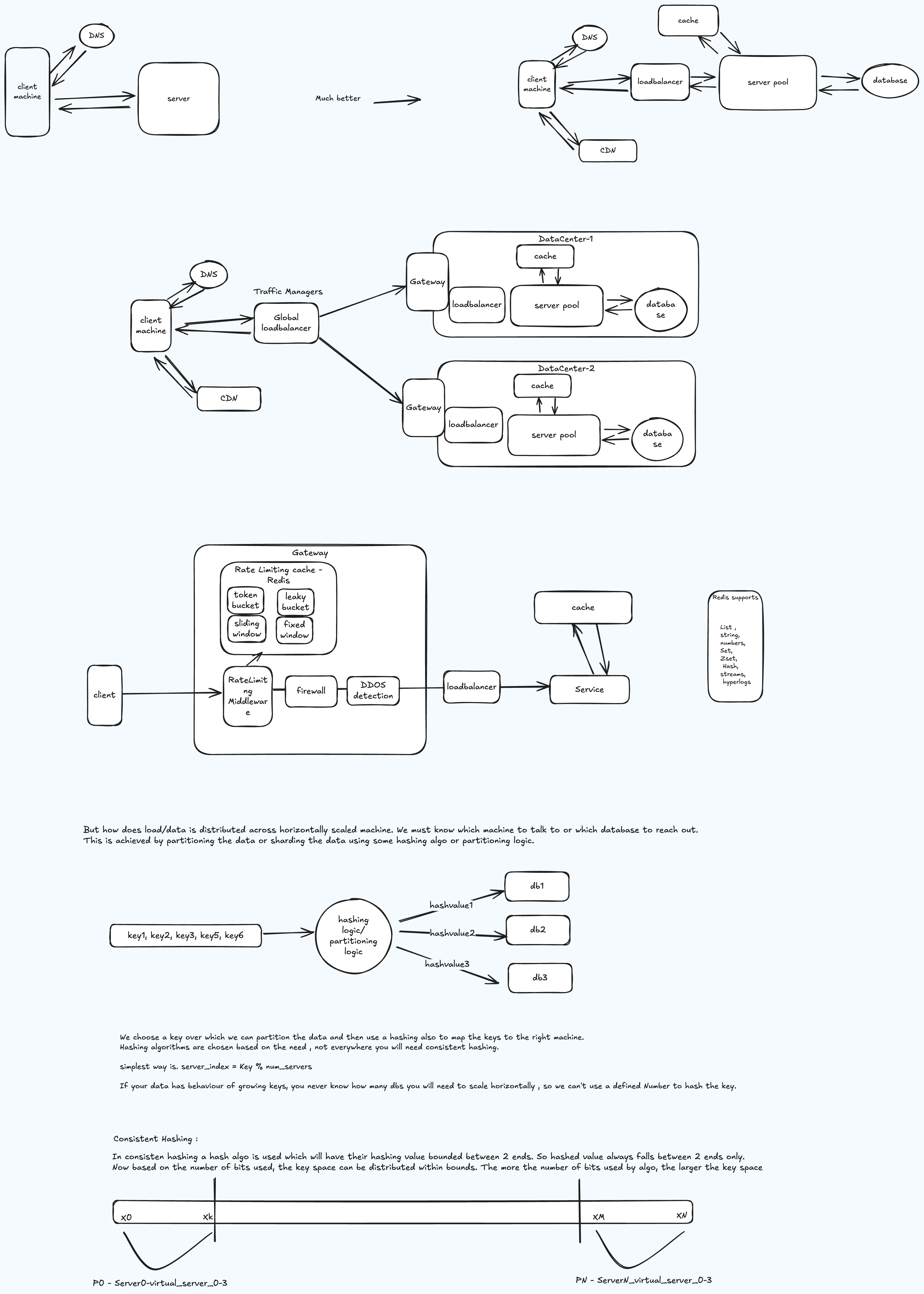

Understanding the basic request flow is fundamental to distributed system design. The diagram illustrates the common path a request takes from client to server and back, passing through the load balancing, service processing, caching, and data storage layers.


What to Observe

- Client → Load Balancer — The request enters through a load balancer that distributes traffic across service instances.

- Load Balancer → Service — The selected service instance picks up the request for processing.

- Service → Cache — Before hitting the database, the service checks the cache for frequently accessed data.

- Service → Database — On a cache miss, the service queries the database and optionally populates the cache for future requests.

- Response Path — The response travels back through the same layers to the client.
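The steps above can be sketched in a few lines of Python. This is a minimal in-process illustration, not a production design: the class names (`Database`, `Service`, `LoadBalancer`), the round-robin policy, the shared `dict` standing in for an external cache, and the sample key `user:1` are all assumptions made for the example.

```python
from itertools import cycle


class Database:
    """Stand-in for a real database; counts reads so cache effects are visible."""

    def __init__(self, rows):
        self.rows = dict(rows)
        self.reads = 0

    def get(self, key):
        self.reads += 1
        return self.rows.get(key)


class Service:
    """One service instance using a cache-aside read path."""

    def __init__(self, name, cache, db):
        self.name = name
        self.cache = cache  # shared dict standing in for an external cache
        self.db = db

    def handle(self, key):
        if key in self.cache:            # Service -> Cache (hit)
            return self.name, self.cache[key], "cache"
        value = self.db.get(key)         # Service -> Database (miss)
        if value is not None:
            self.cache[key] = value      # populate the cache for future requests
        return self.name, value, "database"


class LoadBalancer:
    """Round-robin distribution across service instances (one assumed policy)."""

    def __init__(self, instances):
        self._next = cycle(instances)

    def route(self, key):
        # Client -> Load Balancer -> Service; the return value is the response path.
        return next(self._next).handle(key)


db = Database({"user:1": "Ada"})
cache = {}
lb = LoadBalancer([Service("svc-a", cache, db), Service("svc-b", cache, db)])

first = lb.route("user:1")   # cache miss: svc-a reads the database
second = lb.route("user:1")  # cache hit: svc-b reads the shared cache
```

Because both instances share one cache, the second request is answered without touching the database even though a different instance handles it. A per-instance cache would behave differently, which is one reason real deployments often place the cache in a separate tier.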

Key Takeaways

- Every additional network hop adds latency, so minimize hops where possible

- Caching reduces database load for read-heavy workloads, because repeated reads are served without a database query

- Load balancers enable horizontal scaling by spreading traffic across additional service instances

- This basic pattern applies to the majority of system design interview questions
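A rough back-of-the-envelope model makes the first two takeaways concrete. The sketch below assumes a deliberately simplified cost model (fixed per-hop latency, one cache lookup per request, one database query per cache miss); the function names and every number are illustrative assumptions, not measurements.

```python
def expected_latency_ms(hop_ms, hit_ratio, cache_ms, db_ms):
    """Average latency: fixed hop costs + cache lookup + database cost on misses."""
    return sum(hop_ms) + cache_ms + (1.0 - hit_ratio) * db_ms


def db_queries_per_second(requests_per_second, hit_ratio):
    """Only cache misses reach the database."""
    return requests_per_second * (1.0 - hit_ratio)


# Hypothetical figures: two hops (client -> load balancer, load balancer -> service)
# at 1 ms each, a 1 ms cache lookup, and a 20 ms database query.
hops = [1.0, 1.0]

no_cache_hits = expected_latency_ms(hops, hit_ratio=0.0, cache_ms=1.0, db_ms=20.0)
mostly_hits = expected_latency_ms(hops, hit_ratio=0.75, cache_ms=1.0, db_ms=20.0)
db_load = db_queries_per_second(1000, hit_ratio=0.75)

print(no_cache_hits)  # 23.0
print(mostly_hits)    # 8.0
print(db_load)        # 250.0
```

Under these assumed numbers, a 75% hit ratio cuts database traffic to a quarter of the request rate, while each extra hop adds its full cost to every request regardless of the hit ratio.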
